Field Notes Week 177/520: The Child & The Platform

Welcome to this week’s Field Notes, a 10-year project of mine documenting humankind’s digital transition from the field. These notes are shaped by what I’m seeing, building, and discussing as our physical and digital lives continue to converge.

- Ryan

News is surface-level. Signals live underneath. This section captures developments that hint at deeper shifts in how digital systems are being built, governed, and adopted — often before they’re obvious in the mainstream narrative.

Two stories this week suggest the next phase of platform regulation may be less about speech in the abstract and more about childhood as contested digital territory.

Europe is moving against addictive design, not just harmful content

Reuters reported on 12 May that Ursula von der Leyen said the European Commission would target the “addictive and harmful design practices” of platforms such as TikTok, Meta and X through the coming Digital Fairness Act. The proposal could include a minimum age for social media access, would ban manipulative practices and addictive features, and is expected to expand on the Digital Services Act rather than replace it. Von der Leyen explicitly linked endless scrolling, autoplay and push notifications to depression, self-harm and exploitation in describing why the Commission wants stronger protections for minors.

What stood out is the change in regulatory posture. For years, the dominant framing in Europe was around illegal content, moderation failures, or competition concerns. This looks different. The object of scrutiny is increasingly the design logic itself. Not only what platforms host, but how they capture attention and shape behaviour. That feels like a more structural move, because design choices are closer to the business model than content policies ever were.

Families are taking the youth-safety fight into court

Reuters reported on 14 May that an Italian parents’ group, MOIGE, and a group of families faced Meta and TikTok in the first hearing of a Milan class injunctive action seeking to restrict minors’ access to social media. The lawsuit asks the court to require stronger age-verification systems for users under 14, the removal of potentially manipulative algorithms, and clearer information about the harms of overuse. MOIGE says it wants to protect around 3.5 million Italian children aged 7 to 14 who it believes are illegally active on social media platforms.

That matters because it shifts the youth-safety story from regulatory signalling to legal confrontation. Once families and advocacy groups start bringing product-design questions into business courts, the argument changes. It is no longer only about what governments may do in the future. It is about whether existing institutions can force operational redesign now. The court has not ruled yet, but the fact of the case already signals that platform design is becoming litigable in a more direct way.

What it is

This week’s watch is “Meta found guilty of harming children’s mental health — can this change Meta’s way?” from DW News.

The video pulls together two landmark rulings in New Mexico against Meta and places them inside the wider wave of litigation now building against social media companies in the United States. One ruling centres on harm to children’s mental health. The other focuses on allegations that Meta concealed what it knew about child sexual exploitation on its platforms. The report then broadens into a discussion with legal and policy commentators about Section 230, product design, algorithmic accountability, and whether the current moment could become a turning point for regulation.

What stood out

What stood out is that the argument is shifting from content to design.

The New Mexico verdict reportedly imposed a $375 million fine and turned on the claim that Meta prioritised profit over child safety. That matters because it treats harm not as an unfortunate byproduct of user behaviour, but as something tied to how the system was built and optimised. The interviews in the second half of the video sharpen that point. The comparison to tobacco litigation is particularly useful. Not because the industries are identical, but because the legal logic starts to look familiar once internal knowledge, public harm, and business incentives begin to line up in the same frame.

The Austrian segment at the end also changes the texture of the story. The experiment involving 70,000 children going three weeks without smartphones makes the issue feel less theoretical. It points to a broader international recognition that screen dependency and platform design are no longer fringe concerns. They are becoming matters of public health and civic policy.

Why it lingers

It lingers because it suggests the social media debate may be entering a new phase.

For years, platform accountability often centred on moderation failures, misinformation, or abstract discussions about online safety. This video points somewhere more foundational. The focus is moving toward product architecture itself: algorithms, engagement loops, recommendation systems, and the way these interact with children’s attention and mental health. Once that shift happens, the legal and regulatory stakes get higher. It becomes harder for platforms to argue that they are merely neutral hosts.

The more important signal is that the child is becoming one of the main sites through which platform legitimacy is now being tested. That changes the terrain. Not just a policy problem. Not just a parenting problem. A design problem, a liability problem, and increasingly a systems problem.

Digital assets now sit less as an idea and more as infrastructure in progress. As physical and digital life continue to converge, money and assets are doing the same. What was once framed as “crypto” is increasingly showing up as rails, balance sheets, and policy conversations.

🔥🗺️ The heat map shows the 7-day change in price (red for down, green for up); block size reflects market cap.

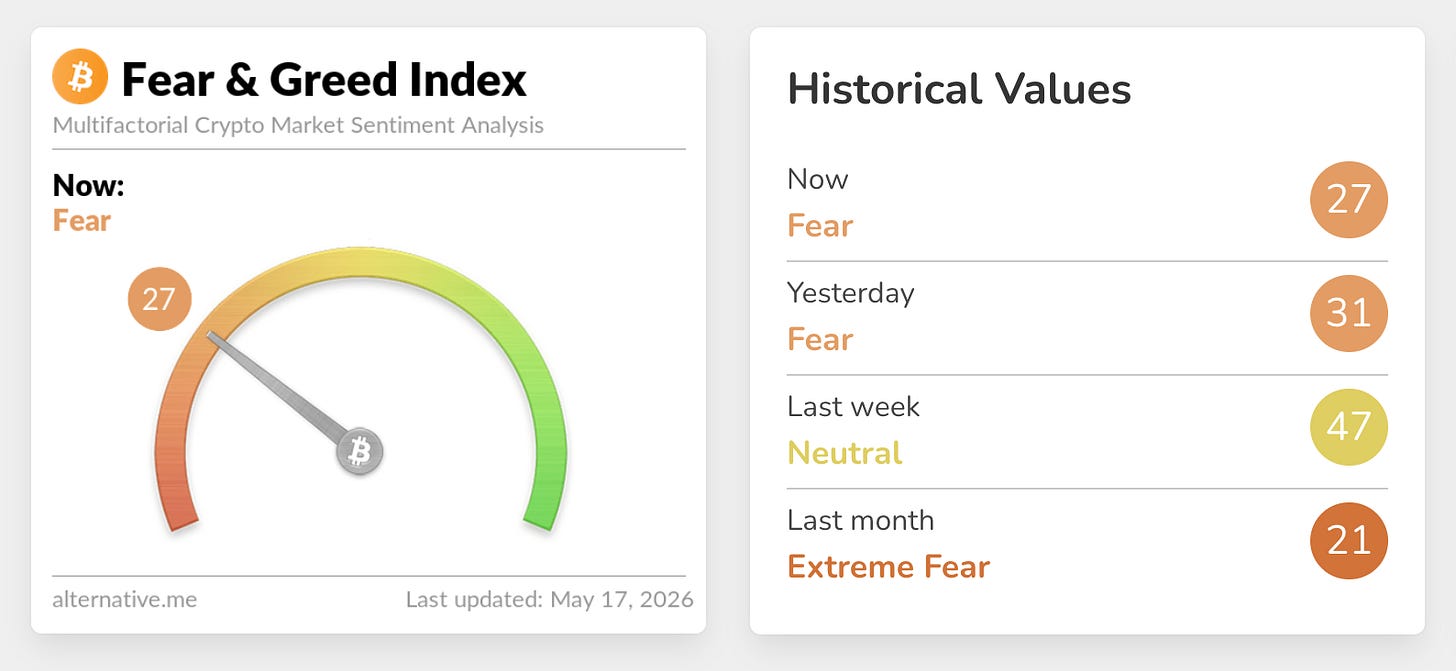

🎭 The Crypto Fear and Greed Index offers insight into the underlying psychological forces that drive the market’s volatility. Sentiment reveals itself across various channels, from social media activity to Google search trends, and when analysed alongside market data, these signals provide meaningful insight into the prevailing investment climate. The Fear & Greed Index aggregates these inputs, assigns a weighted value to each, and distils them into a single, unified score.
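For readers who like to see the mechanics, the aggregation step can be sketched as a simple weighted average. This is only an illustration, not the index provider's actual method: the `Signal` class, the `fear_greed_score` function, the input names, the 0 to 100 scale, and every score and weight below are assumptions of mine.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """One sentiment input (hypothetical structure, for illustration only)."""
    name: str
    score: float   # assumed already normalised: 0 = extreme fear, 100 = extreme greed
    weight: float  # assumed relative importance of this input


def fear_greed_score(signals: list[Signal]) -> float:
    """Combine the inputs into one score using a weighted average."""
    total_weight = sum(s.weight for s in signals)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    for s in signals:
        if not 0 <= s.score <= 100:
            raise ValueError(f"{s.name}: score must be between 0 and 100")
    # Dividing by the total weight keeps the result on the same 0-100 scale
    # even when the weights do not add up to exactly 1.
    return sum(s.score * s.weight for s in signals) / total_weight


# Made-up inputs and weights, chosen only to show the arithmetic.
signals = [
    Signal("social_media_activity", 40.0, 0.25),
    Signal("search_trends", 20.0, 0.25),
    Signal("market_data", 60.0, 0.50),
]
index = fear_greed_score(signals)
print(index)  # 45.0
```

The point of the sketch is the shape of the calculation: a heavily weighted input (here, market data) pulls the single score toward itself, which is why one number can mask disagreement between the underlying channels.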

This section captures developments at the edge of digital systems. New interfaces, tools, and capabilities that feel early, unfinished, or slightly ahead of their moment. I’m less interested in what’s impressive today and more interested in what might quietly reshape how people work, coordinate, and interact over time.

The next platform fight may be over the child, not the user.

Frontier technology is usually described in technical terms. A new model. A new interface. A new device. But sometimes the frontier shows up somewhere less obvious, in the institutions that sit around the technology rather than inside it. That feels like the more interesting way to read this week’s story about Meta and Google funding children’s organisations while facing scrutiny over the effects of their products on minors.

On the surface, it looks like a reputational story. Reuters reported that the companies have provided millions of dollars to groups such as Sesame Street, Girl Scouts, and Highlights, helping fund materials on moderation, screen use, and digital literacy, even as critics argue their platforms remain structurally optimised for engagement and dependence. (reuters.com)

What stood out is that this is not only a communications strategy. It is a systems strategy. If regulators are starting to focus less on content and more on design, then the companies involved have to do more than defend individual features. They have to shape the environment in which harm is understood. That means influence does not stop at the product. It extends into the social layer around the product: schools, parents, children’s brands, trusted intermediaries, and the language through which safety is discussed.

That is what makes this feel like Frontier Tech. Because the frontier here is not just technical capability. It is the growing ability of platforms to reorganise the institutions that interpret them. Once a product becomes embedded enough, the battle shifts. No longer only “what does the technology do?” but “who gets to explain what it means, what counts as harm, and what kind of response is considered reasonable?”

In that sense, the child becomes a strategic boundary object. Not just a protected user group, but the point at which design, regulation, education, family authority, and corporate legitimacy all meet. Reuters reported that critics, including pediatricians and child-safety advocates, argue these partnerships risk redirecting attention toward family habits and media literacy rather than the underlying engagement mechanics of the platforms themselves. That matters because it changes the centre of gravity. Responsibility moves outward, away from the system and toward the people trying to live inside it.

This may be the more important signal. As digital systems mature, they stop competing only through features. They begin competing through the governance layers around those features. Through policy. Through pedagogy. Through trust. Through the institutions that help society decide what is normal, what is risky, and what should be tolerated.

Still early. But worth noting. Because one of the clearest signs that a technology has become infrastructural is that the struggle around it no longer happens only inside the product. It spreads into the institutions that raise, teach, and protect the people expected to use it.

“Children are the living messages we send to a time we will not see.”

John F. Kennedy

John F. Kennedy was the 35th president of the United States, serving from 1961 until his assassination in 1963. Before the presidency, he served in the U.S. House of Representatives and then the U.S. Senate, and his public image was closely tied to ideas of generational renewal, civic duty, and the future America might yet build.

That is part of why this line works so well. Kennedy often spoke in long-horizon terms, with an eye toward what one generation leaves behind for the next. Read that way, the quote is not sentimental. It is structural. Children are not just dependants or future consumers. They are the way a society carries its values forward into a world it will not live to see.

It also lands neatly with this week’s themes. If platforms, institutions, and technologies are all competing to shape childhood, then they are also competing to shape the messages being sent forward. That makes the design of these systems feel less like a narrow policy question and more like a civilisational one.