Field Notes Week 171/520: Naming the Asset, Approving the Agent

Welcome to this week’s Field Notes, a 10-year project of mine documenting humankind’s digital transition from the field. These notes are shaped by what I’m seeing, building, and discussing as our physical and digital lives continue to converge.

- Ryan

(Connect with me on LinkedIn)

News is surface-level. Signals live underneath. This section captures developments that hint at deeper shifts in how digital systems are being built, governed, and adopted — often before they’re obvious in the mainstream narrative.

Regulators draw a line between digital commodities and securities

What it is. On 17 March 2026 the U.S. Securities and Exchange Commission and Commodity Futures Trading Commission issued a joint 68-page interpretive release that puts longstanding classification debates on paper. The release explicitly lists sixteen crypto assets, including Bitcoin, Ether, Solana, XRP, Dogecoin and several others, as “digital commodities,” which means that activities like mining, staking and certain airdrops fall outside securities law. The agencies organised crypto assets into five buckets (digital commodities, digital collectibles, digital tools, stablecoins and digital securities) and described the document as a first step toward a harmonised market-structure framework. The same week, Senators Thom Tillis and Angela Alsobrooks said they had reached an agreement in principle with the White House to move the long-stalled Clarity Act forward by revising its stablecoin provisions to address banks’ concerns about deposit flight. The bill aims to codify the commodity-versus-security line and settle digital-asset market-structure questions that have lingered in limbo.

What stood out. Instead of sweeping statements, the interpretive release provides a list. Sixteen named tokens are described as commodities because their value arises from the programmatic operation of a network rather than from the managerial efforts of others. The document also clarifies that protocol mining and staking are administrative functions, not investment contracts. This level of specificity signals a shift from principle-based debate toward operational definitions. The agencies acknowledge that the interpretation is not binding law and tie it to broader legislative efforts, including a memorandum of understanding signed a week earlier to coordinate oversight. Meanwhile, the senators’ compromise on the Clarity Act centres on stablecoin yields (crypto companies want to offer rewards; banks fear a flight of deposits), which underscores that deposit-funding mechanics, not token prices, are driving negotiations. The White House’s push to resolve the stalemate shows that political capital is being spent on defining market plumbing.

Why it lingers. The joint release does not settle the matter. Interpretations can be rescinded, and the Clarity Act still needs to clear multiple committees before becoming law. Yet the release’s taxonomy and the senators’ agreement reduce ambiguity for developers, exchanges and compliance teams that have been operating under shifting enforcement positions. The focus on deposit flight and stablecoin yields suggests that financial-stability concerns are now intertwined with digital-asset regulation, moving the conversation from speculative fervour to balance-sheet risk. Viewed over a decade, this moment may read less like the birth of a new asset class and more like the slow stitching of digital assets into existing regulatory fabric. The process remains uneven, but naming things is a precondition for building durable infrastructure.

Binding AI agents to human approval

What it is. On 20 March 2026 Yubico and Delinea announced an integration that ties non‑human identity governance to physical human presence. The companies combined Yubico’s hardware‑rooted role‑delegation tokens with Delinea’s centralized identity governance and StrongDM’s runtime authorization to ensure that high‑risk operations executed by an AI agent carry cryptographic proof that a verified human approved them. The tokens are backed by YubiKey hardware and can record which person authorised a specific action under defined constraints. Delinea’s platform layers policy enforcement across both human and non‑human identities, supplying just‑in‑time authorization and audit trails. The offering is framed as a response to the proliferation of autonomous agents performing privileged tasks across software factories, cloud infrastructure and developer pipelines.
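To make the idea of “cryptographic proof that a verified human approved” an agent’s action more concrete, here is a minimal Python sketch of the general pattern: an approval record names the human, the agent, the permitted action and resource, and an expiry, and it is signed so the agent cannot widen its own scope. This is not Yubico’s, Delinea’s or StrongDM’s API. Every name here (`ApprovalToken`, `sign`, `authorize`) is hypothetical, and HMAC-SHA256 from the standard library stands in for the hardware-backed signature a YubiKey would actually produce.

```python
import hashlib
import hmac
import json
import time
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class ApprovalToken:
    """Hypothetical record of one human approving one agent action."""
    approver: str      # the verified human who approved the action
    agent_id: str      # the non-human identity allowed to act on that approval
    action: str        # what the agent may do, e.g. "deploy"
    resource: str      # where it may do it, e.g. "prod-cluster"
    expires_at: float  # Unix time after which the approval is void
    signature: str = ""


def _payload(token: ApprovalToken) -> bytes:
    # Canonical JSON of every field except the signature itself.
    fields = {
        "approver": token.approver,
        "agent_id": token.agent_id,
        "action": token.action,
        "resource": token.resource,
        "expires_at": token.expires_at,
    }
    return json.dumps(fields, sort_keys=True).encode()


def sign(token: ApprovalToken, key: bytes) -> ApprovalToken:
    # Stand-in for the hardware signature: HMAC-SHA256 over the payload.
    sig = hmac.new(key, _payload(token), hashlib.sha256).hexdigest()
    return replace(token, signature=sig)


def authorize(token: ApprovalToken, key: bytes, agent_id: str, action: str,
              resource: str, now: Optional[float] = None) -> bool:
    """Allow the action only if a valid, unexpired, matching approval exists."""
    now = time.time() if now is None else now
    expected = hmac.new(key, _payload(token), hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected, token.signature)  # approval is authentic
        and token.agent_id == agent_id                  # bound to this agent
        and token.action == action                      # scoped to this action
        and token.resource == resource                  # ...on this resource
        and now < token.expires_at                      # still within its window
    )


# Usage with a made-up key and identities.
key = b"hypothetical-device-secret"
approval = sign(ApprovalToken("alice@example.com", "agent-42", "deploy",
                              "prod-cluster", expires_at=1_000.0), key)
print(authorize(approval, key, "agent-42", "deploy", "prod-cluster", now=900.0))
print(authorize(approval, key, "agent-42", "delete", "prod-cluster", now=900.0))
```

The check fails closed: a tampered approval, an action outside the approved scope, or an expired window all return `False`. A production design would more likely use an asymmetric key that never leaves the hardware token, so the verifier holds only a public key.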

What stood out. The integration acknowledges a rapidly emerging class of identities—AI agents—that current identity systems were not designed to handle. Software‑only controls can authenticate an agent but cannot prove that a human actually approved the action. Hardware attestation alone does not evaluate policy or enforce scope at scale. By binding the two, Yubico and Delinea are effectively saying that accountability is now as important as authentication. Their argument treats human approval as a verifiable credential, not a checkbox, and embeds it into a chain of custody for code changes, infrastructure provisioning or other sensitive operations. The announcement also references a NIST comment period on AI‑agent identity and authorization, signalling that standards bodies are paying attention.

Why it lingers. As AI systems move from recommendation engines into operational roles, the question of “who is responsible” becomes a design constraint. Requiring cryptographically verifiable human approval may feel burdensome today, but it introduces a pattern: non‑human actors will need to be anchored to human accountability for as long as regulators, insurers and customers demand it. The idea of binding an AI agent’s action to a specific person challenges the notion of “set and forget” automation and may slow certain efficiencies. Yet it also closes a gap in the governance stack by turning authorisation into a first‑class citizen. If AI agents are to become infrastructural, the friction between autonomy and accountability will be an early condition rather than an anomaly.

What it is

A short explainer on the proposed “Clarity Act” in the United States, aimed at defining how digital assets are classified and which regulators oversee them.

What stood out

The focus is not innovation. It is classification. Whether a token is treated as a security or a commodity determines the entire regulatory pathway. The bill attempts to draw clearer lines between agencies, particularly the SEC and CFTC, and reduce the current ambiguity that has shaped much of the industry’s development.

There is also a subtle shift in tone. Digital assets are no longer being debated as fringe instruments. They are being integrated into existing regulatory frameworks, even if those frameworks are still adjusting.

Why it lingers

Regulation often arrives after the fact, but it sets the conditions for what can scale. If the Clarity Act succeeds in establishing workable definitions, it may do less to accelerate innovation directly and more to remove hesitation from institutions that have been waiting on the sidelines.

At the same time, clarity in one jurisdiction can create fragmentation globally. As different regions define digital assets in different ways, interoperability becomes a legal question as much as a technical one.

It remains unclear whether alignment will emerge or whether digital finance will continue to develop across multiple, partially incompatible regulatory regimes.

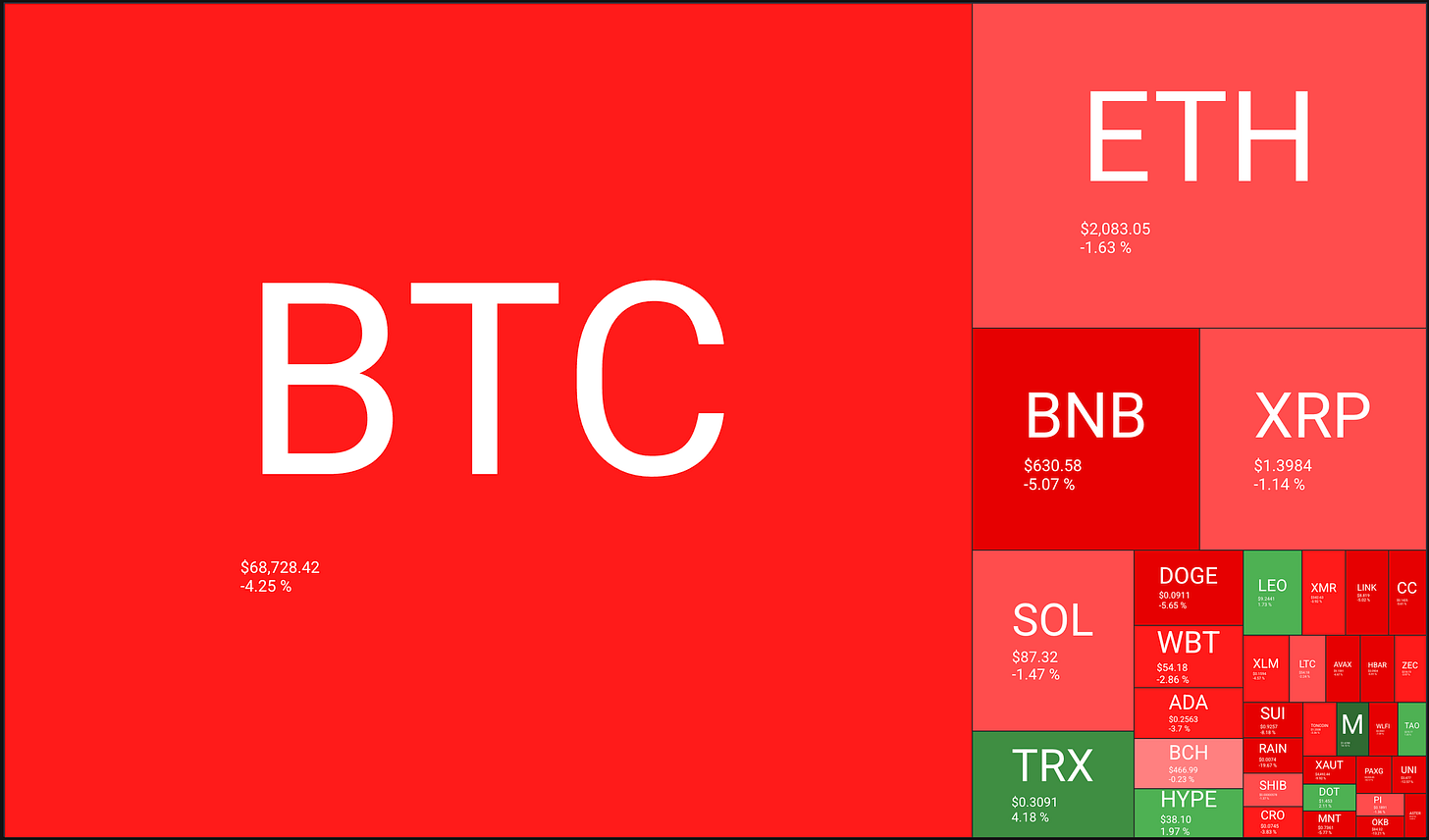

Digital assets now look less like an idea and more like infrastructure in progress. As physical and digital life continue to converge, money and assets are doing the same. What was once framed as “crypto” is increasingly showing up as rails, balance sheets, and policy conversations.

🔥🗺️ The heat map shows the 7-day change in price (red for down, green for up), and block size represents market cap.
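The heat map’s two encodings reduce to simple arithmetic. A minimal Python sketch, assuming each asset comes with a current price, a price from seven days earlier and a market cap; the field names and sample figures are made up for illustration, and showing an unchanged price as grey is an assumption, since the caption only specifies red and green.

```python
def heat_map_tiles(assets: list) -> list:
    """Turn price and market-cap data into tile colour and relative size."""
    total_cap = sum(a["market_cap"] for a in assets)
    tiles = []
    for a in assets:
        # 7-day change as a percentage of the price a week ago
        change = (a["price_now"] - a["price_7d_ago"]) / a["price_7d_ago"] * 100
        colour = "green" if change > 0 else "red" if change < 0 else "grey"
        tiles.append({
            "symbol": a["symbol"],
            "change_pct": round(change, 2),
            "colour": colour,
            # block size: this asset's share of the total market cap shown
            "area_share": a["market_cap"] / total_cap,
        })
    return tiles


sample = [  # made-up numbers for illustration only
    {"symbol": "AAA", "price_now": 110.0, "price_7d_ago": 100.0, "market_cap": 300.0},
    {"symbol": "BBB", "price_now": 45.0, "price_7d_ago": 50.0, "market_cap": 100.0},
]
for tile in heat_map_tiles(sample):
    print(tile)
```

Real heat maps usually shade by the size of the move rather than using two flat colours; the binary colour here just mirrors the caption.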

🎭 The Crypto Fear & Greed Index offers a view into the psychological forces that drive the market’s volatility. Sentiment reveals itself across various channels, from social media activity to Google search trends, and when analysed alongside market data, these signals provide meaningful insight into the prevailing investment climate. The index aggregates these inputs, assigns a weight to each, and distils them into a single, unified score.
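As a rough illustration of weighting several inputs and distilling them into one score, here is a Python sketch of a weighted average over signals that are assumed to be normalised already to a 0 to 100 scale. The signal names, weights and band labels are hypothetical and do not reproduce any published index’s methodology.

```python
def fear_greed_score(signals: dict, weights: dict) -> float:
    """Weighted average of signals normalised to 0 (fear) .. 100 (greed)."""
    if set(signals) != set(weights):
        raise ValueError("each signal needs exactly one weight")
    total_weight = sum(weights.values())
    return sum(signals[name] * weights[name] for name in signals) / total_weight


def label(score: float) -> str:
    # Hypothetical band edges; published indices may draw them differently.
    if score < 25:
        return "Extreme Fear"
    if score < 45:
        return "Fear"
    if score <= 55:
        return "Neutral"
    if score < 75:
        return "Greed"
    return "Extreme Greed"


# Illustrative inputs and relative weights, not any published methodology.
signals = {"volatility": 20, "momentum": 40, "social_media": 60, "search_trends": 30}
weights = {"volatility": 3, "momentum": 3, "social_media": 2, "search_trends": 2}
score = fear_greed_score(signals, weights)
print(score, label(score))  # 36.0 Fear
```

Dividing by the total weight means the weights only need to be relative, so adding or reweighting a signal keeps the score on the same 0 to 100 scale.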

This section captures developments at the edge of digital systems. New interfaces, tools, and capabilities that feel early, unfinished, or slightly ahead of their moment. I’m less interested in what’s impressive today and more interested in what might quietly reshape how people work, coordinate, and interact over time.

Edge AI is moving onto the device

For a long time, devices mostly watched and reported. They captured data, passed it upstream, and waited for the cloud to decide what came next. That architecture still dominates. But it is starting to loosen.

What stood out this week is not a single breakthrough model or gadget. It is the growing sense that local inference is becoming practical enough to change behaviour. Microsoft put it plainly in a recent post aimed at developers: the NPU is now a practical target for AI workloads, which means faster results, lower latency, and the ability to work offline or even in airplane mode. Cloud does not have to be the default anymore. (TECHCOMMUNITY.MICROSOFT.COM). That sounds incremental. It may be more than that.

The privacy case is familiar. The European Data Protection Supervisor defines on-device AI as inference and continuous training performed directly on end devices, close to where the data is generated, rather than in the cloud. The benefits are obvious enough: lower latency, more real-time responsiveness, and less need to move sensitive data elsewhere. (European Data Protection Supervisor). But the more interesting shift is architectural.

When models run locally, a device stops being a thin client to a distant system. It starts to become a small decision-making environment in its own right. That changes product design. It changes failure modes. It changes what “offline” means. Offline stops being a degraded state and starts to look more like a design choice.

There are still real constraints. On-device systems remain heavily limited by memory bandwidth and power budgets. One recent industry analysis argued that memory bandwidth, more than raw TOPS figures, is the real bottleneck for on-device large language models, because generating each token still requires moving the model weights through local memory channels far narrower than those available in data centres. (Edge AI and Vision Alliance).
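The bandwidth argument can be made concrete with back-of-envelope arithmetic. When decoding one token at a time, a model has to read roughly all of its weights once per token, so memory bandwidth divided by model size gives a ceiling on tokens per second. The Python sketch below assumes a 7-billion-parameter model quantised to 4 bits and uses illustrative round bandwidth figures rather than measurements of any particular device.

```python
def max_decode_tokens_per_second(params_billion: float, bits_per_weight: float,
                                 bandwidth_gb_per_s: float) -> float:
    """Upper bound on single-stream decode speed when it is memory-bound.

    Each new token needs (roughly) every weight read once, so the ceiling is
    memory bandwidth divided by the size of the weights. KV-cache traffic,
    compute limits and batching are ignored, so real figures will be lower.
    """
    weight_gb = params_billion * bits_per_weight / 8  # GB streamed per token
    return bandwidth_gb_per_s / weight_gb


# Illustrative round numbers, not measurements of any specific chip.
for setting, bandwidth in [("phone-class memory", 60), ("data-centre HBM", 3000)]:
    tps = max_decode_tokens_per_second(7, 4, bandwidth)  # 7B model, 4-bit weights
    print(f"{setting}: ~{tps:.0f} tokens/s ceiling")
```

With these assumed figures the ceiling is about 17 tokens/s on the device versus about 857 tokens/s in the data centre. Compute throughput (TOPS) does not appear in the formula at all, which is the point the analysis makes.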

That matters because it explains the current shape of the market. We are not seeing the cloud disappear. We are seeing tasks split more carefully. Small, frequent, latency-sensitive, privacy-sensitive workloads move outward. Heavier reasoning and coordination still sit elsewhere. In other words, intelligence is becoming more distributed.
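The split described above can be expressed as a simple routing policy. This is a hypothetical Python sketch, not how any particular platform decides: the `Task` fields, the `CLOUD_ROUND_TRIP_MS` threshold and the precedence of the rules (offline first, then privacy, then reasoning load) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

CLOUD_ROUND_TRIP_MS = 300  # hypothetical network + queueing cost of a cloud call


@dataclass
class Task:
    name: str
    latency_budget_ms: int       # how long the user can reasonably wait
    privacy_sensitive: bool      # data should stay where it was generated
    needs_heavy_reasoning: bool  # beyond what a small on-device model can do


def route(task: Task, network_available: bool = True) -> str:
    """Return "device" or "cloud" for a task under a simple hybrid policy."""
    if not network_available:
        return "device"  # offline is a design case, not a failure
    if task.privacy_sensitive:
        return "device"  # keep sensitive data on the device
    if task.needs_heavy_reasoning and task.latency_budget_ms >= CLOUD_ROUND_TRIP_MS:
        return "cloud"   # heavier reasoning still sits in the data centre
    return "device"      # small, frequent, latency-sensitive work stays local


tasks = [
    Task("wake word", 50, privacy_sensitive=False, needs_heavy_reasoning=False),
    Task("summarise private notes", 5000, privacy_sensitive=True, needs_heavy_reasoning=True),
    Task("plan a multi-city trip", 5000, privacy_sensitive=False, needs_heavy_reasoning=True),
]
for t in tasks:
    print(t.name, "->", route(t), "| offline ->", route(t, network_available=False))
```

Here the wake word and the private notes stay on the device, while trip planning goes to the cloud when a network is available and falls back to the device when it is not.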

There is also a behavioural layer to this. Once a device can process more locally, users begin to expect it to respond with less friction. Fewer loading states. Fewer handoffs. Less visible dependence on network conditions. The device starts to feel more autonomous, even when the wider system is still hybrid. This feels directionally important.

Recent edge AI coverage has described 2026 as an inflection point, driven by rising cloud costs, better silicon, and the move from pilot deployments into mass-market devices. Even allowing for some industry enthusiasm, the underlying logic is sound: if local hardware gets good enough, sending everything away starts to look less like necessity and more like habit. (Internet of Things News). That may be the real signal here.

The frontier is not just that AI is getting smaller. It is that more decision-making is moving closer to the point where behaviour happens. The network is still there. The cloud is still there. But the balance is shifting, and once systems begin to think closer to where they act, a lot of other assumptions start to move with them.

“Technology is a useful servant but a dangerous master.”

Christian Lous Lange

Christian Lous Lange was a Norwegian historian, diplomat, and Nobel Peace Prize laureate, working in the early 20th century at a time when industrial systems were beginning to reshape geopolitics and society at scale. His perspective came from watching coordination systems expand faster than the institutions designed to govern them.

The line lands because it is not anti-technology. It is about alignment. Tools extend capability, but they also embed assumptions, defaults, and incentives. Left unattended, those begin to shape behaviour in return.